As a data center ages, more IT equipment is usually added to run the business, and the existing cooling may no longer meet demand. The ambient temperature then rises, which affects the normal operation of the IT equipment. In traditional room-level cooling data centers in particular, the cooling capacity of the air conditioners is often not fully utilized.

How can you get the most out of the existing cooling system, so that IT equipment keeps running normally, the existing data center is put to better use, and costs are reduced?

The key to improving the efficiency of the cooling system is to raise the return air temperature of the air conditioner: all of the cold air should be heated by the IT equipment before it returns to the air conditioner, with no airflow short circuit.
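Why return air temperature matters can be seen from the standard sensible heat relation, Q = ρ · V · cp · ΔT. The sketch below is only an illustration: the air properties are approximate room-condition values, and the airflow and temperatures are hypothetical numbers, not measurements from any real site.

```python
# Approximate properties of air near room conditions (assumed values).
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_KJ_KG_K = 1.005


def sensible_heat_kw(airflow_m3_s: float, supply_c: float, return_c: float) -> float:
    """Heat carried away by an air stream, in kW, from Q = rho * V * cp * dT."""
    delta_t = return_c - supply_c
    return AIR_DENSITY_KG_M3 * airflow_m3_s * AIR_SPECIFIC_HEAT_KJ_KG_K * delta_t


# Same airflow and supply temperature, two hypothetical return temperatures.
# A low return temperature means cold air bypassed the IT equipment.
mixed = sensible_heat_kw(airflow_m3_s=5.0, supply_c=18.0, return_c=24.0)
separated = sensible_heat_kw(airflow_m3_s=5.0, supply_c=18.0, return_c=30.0)

print(f"short-circuited return: {mixed:.1f} kW removed")
print(f"well-separated return:  {separated:.1f} kW removed")
```

With the airflow unchanged, doubling the temperature difference doubles the heat the air carries back to the air conditioner, which is why cold air that bypasses the IT equipment wastes capacity.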

Check the existing cooling system.

After a cooling system has been in service for a long time, its cooling capacity may decrease for a variety of reasons. Check for and repair anything that may reduce capacity, such as dirty filters, insufficient refrigerant, and accumulated dust or scale. Removing these problems helps restore the cooling capacity.

Don’t worry about a moderate temperature rise.

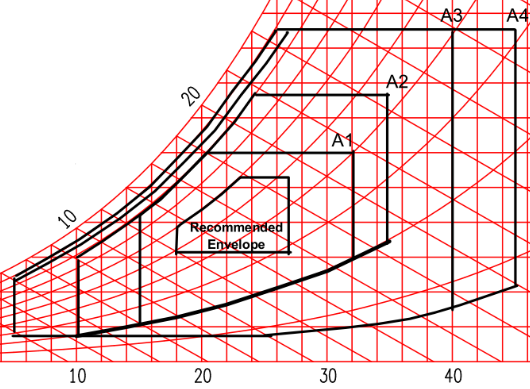

IT equipment is not as fragile as people think. If the highest temperature reading at the front of the rack is below 27°C (81°F), it is within the range recommended by ASHRAE TC 9.9. Even if the intake air temperature rises to 32°C (90°F), it is still within the A1 allowable range. Most IT equipment can be operated safely at this temperature (the inlet air temperature of most IT equipment can reach 35°C (95°F)). The temperature at the rear of the cabinet is usually about 10°C (18°F) higher than at the front. Even if the rear temperature reaches 40°C (104°F), do not use a fan to cool the rear of the rack: doing so mixes hot air into the cold aisle and degrades cooling.
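As a rough illustration, the thresholds in the paragraph above can be turned into a small check for inlet readings. This is a minimal sketch: the function and constant names are made up for this example, only the upper limits cited in the text are modelled (lower limits and humidity are ignored), and the equipment vendor's specification should take precedence.

```python
RECOMMENDED_MAX_C = 27.0   # upper end of the recommended range cited in the text
A1_ALLOWABLE_MAX_C = 32.0  # upper end of the A1 allowable range cited in the text


def c_to_f(temp_c: float) -> float:
    """Convert an absolute temperature from Celsius to Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


def delta_c_to_f(delta_c: float) -> float:
    """Convert a temperature *difference*: no 32-degree offset applies."""
    return delta_c * 9.0 / 5.0


def classify_inlet(temp_c: float) -> str:
    """Classify a rack inlet reading against the two upper limits above."""
    if temp_c <= RECOMMENDED_MAX_C:
        return "recommended"
    if temp_c <= A1_ALLOWABLE_MAX_C:
        return "allowable"
    return "too hot"


for reading_c in (25.0, 30.0, 33.0):  # hypothetical inlet readings
    print(f"{reading_c:.1f} C / {c_to_f(reading_c):.1f} F: {classify_inlet(reading_c)}")
```

Note that a front-to-rear rise is a temperature difference, so it converts with `delta_c_to_f`: a 10°C rise is an 18°F rise.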

Install IT equipment properly.

Measure the temperature at the top, middle, and bottom of the front of each rack. The top of the rack is usually the hottest. If the bottom of the rack is cool and has open rack space, try moving higher-power IT equipment toward the bottom of the rack (the coldest area).
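The three-point measurement can be summarized with a few lines of code. The sketch below assumes the readings are collected by hand or from sensors into a dictionary; the rack values and the `summarize_rack` helper are hypothetical.

```python
def summarize_rack(readings_c: dict) -> dict:
    """Return the hottest and coldest measuring positions and their spread."""
    hottest = max(readings_c, key=readings_c.get)
    coldest = min(readings_c, key=readings_c.get)
    return {
        "hottest": hottest,
        "coldest": coldest,
        "spread_c": readings_c[hottest] - readings_c[coldest],
    }


# Hypothetical front-of-rack readings in degrees Celsius.
rack_a = {"top": 27.5, "middle": 24.0, "bottom": 20.5}
summary = summarize_rack(rack_a)

print(f"hottest position: {summary['hottest']}")
print(f"coldest position: {summary['coldest']} (candidate for higher-power equipment)")
print(f"top-to-bottom spread: {summary['spread_c']:.1f} C")
```

A large spread between positions suggests that hot air is recirculating toward the top of the rack and that the coldest position has cooling to spare.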

Use blanking panels to isolate hot and cold air.

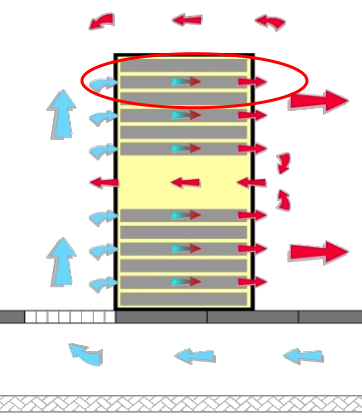

Be sure to use blanking panels to block all unused spaces at the front of the rack. This prevents hot air from recirculating from the back of the rack to the front and creating local hot spots.

Keep cables from blocking the rack’s airflow.

A mass of untidy cables at the front or rear of the cabinet obstructs airflow. It is likely to increase the fan pressure and fan power of the IT equipment and can cause the equipment to overheat even when there is sufficient cold air in front. Consider using short power cords and network cables, and route them inside the cabinet so that they do not obstruct airflow.

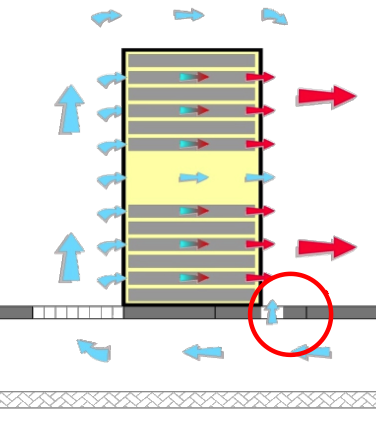

Proper use of raised floors.

If you use a raised floor to supply air, make sure that the perforated floor tiles are properly distributed across the data center area. Do not place perforated tiles at the rear of the rack. For higher-power racks, you can increase the open-area ratio of the perforated tiles at the front of the rack, or use tiles with an air-guiding function or a fan. Seal all floor openings other than the rack air intake path as far as possible to avoid wasting cold air.

Watch for obstacles under the raised floor.

If air is supplied under the raised floor, pay attention to cable troughs and other obstacles beneath it. Such obstacles significantly disrupt the cold air flow and cause uneven distribution of cold air in the data center, so areas far from the air conditioner receive insufficient cooling and run too hot. Where this happens, try to remove the obstacles so that the air supply flows more smoothly. Guided by temperature measurements, place more IT equipment in areas with lower temperature (sufficient cooling).

Plan the air outlets and return vents of the ducted air supply system carefully.

If the data center has an overhead ducted air supply system, make sure that the cold air outlets are directly in front of the racks and the return duct is above the hot aisle. If the supply and return vents are poorly positioned, the return airflow becomes disorganized, cooling efficiency drops, and equipment may overheat. The key is to ensure that hot air from the back of the cabinet returns directly to the room air conditioner without mixing with cold air. In fact, a higher return air temperature improves both the efficiency and the capacity of the cooling system.

Turn off idle load.

Data centers should turn off the lights when no one is working, which can save about 3% of power and reduce cooling requirements by about 3% as well. In addition, a data center that has been running for many years often contains zombie servers that are still powered on but do no useful work. This is quite a common situation. Finding these servers and shutting them down can save a great deal of power and cooling.

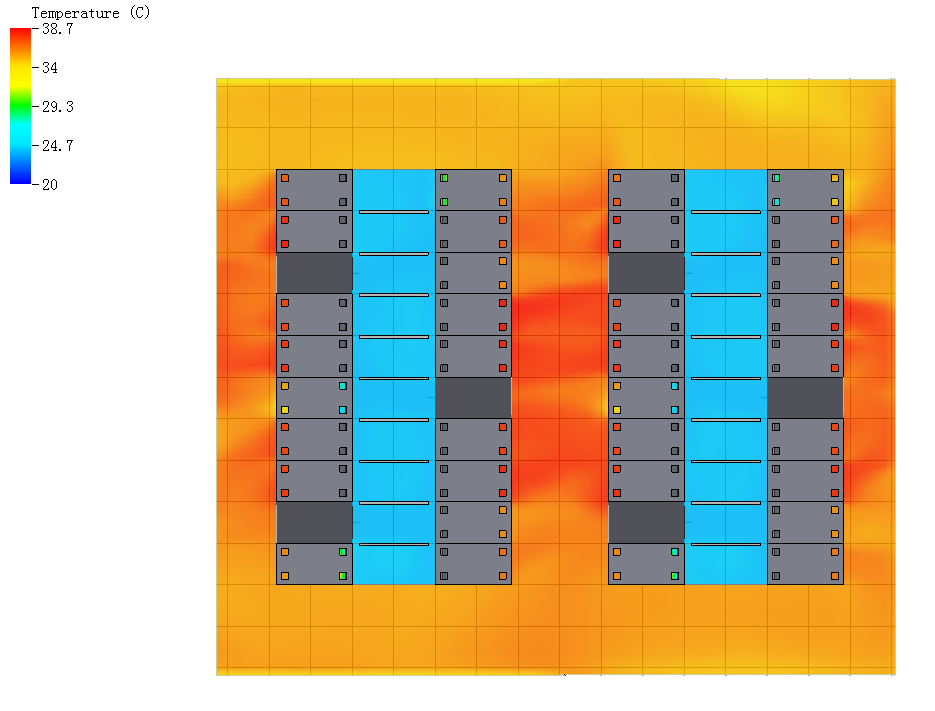

Use CFD software as an optimization guide.

Where resources permit, simulate the data center with CFD software. A simulation can determine how much cooling capacity remains, which areas are too cold or too hot, and where IT equipment can be added, and it can guide optimization of the equipment layout.

Summary

By improving airflow organization in the equipment room and shutting down idle loads, the utilization of the cooling system can be increased by more than 10%. However, when the heat load of the data center exceeds the capacity of the cooling system, only adding cooling capacity can solve the problem. Therefore, check the relationship between heat load and cooling capacity in the data center before implementing these measures.
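The heat load check can start as simple arithmetic. The sketch below assumes that essentially all electrical power drawn by the IT equipment ends up as heat in the room; the rack loads, the allowance for other loads, and the cooling capacity are hypothetical figures, and `cooling_headroom_kw` is a name invented for this example.

```python
def cooling_headroom_kw(rack_loads_kw, cooling_capacity_kw, other_loads_kw=0.0):
    """Remaining cooling capacity in kW; a negative result means a shortfall."""
    total_heat_kw = sum(rack_loads_kw) + other_loads_kw
    return cooling_capacity_kw - total_heat_kw


# Hypothetical figures: measured rack power, lighting and other loads,
# and the usable sensible capacity of the installed cooling units.
rack_loads_kw = [4.0, 6.5, 3.0, 8.0]
capacity_kw = 30.0

headroom = cooling_headroom_kw(rack_loads_kw, capacity_kw, other_loads_kw=1.5)
utilization = (capacity_kw - headroom) / capacity_kw

print(f"headroom: {headroom:.1f} kW")
print(f"utilization: {utilization:.0%}")
if headroom < 0:
    print("Heat load exceeds capacity: airflow fixes alone will not be enough.")
```

If the headroom is negative, the airflow measures described in this article cannot close the gap on their own and additional cooling is needed.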

Download the file here >>Maximize Efficiency of Existing Cooling System in Data Center-2019
